Generating a Job-Requirements Word Cloud with Python

阿新 • Published: 2017-08-10


Continuing from the previous post: I scraped big-data-related job postings from http://www.17bigdata.com/jobs/.

```python
# -*- coding: utf-8 -*-
"""
Created on Thu Aug 10 07:57:56 2017

@author: lenovo
"""
import os

import jieba
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from wordcloud import WordCloud


def cloud(root, name, stopwords):
    """Build a word cloud from one text file and save it as a PNG next to it."""
    filepath = os.path.join(root, name)
    with open(filepath, 'r', encoding='utf-8') as f:
        txt = f.read()

    # Segment the Chinese text into words
    words = list(jieba.cut(txt))
    df = pd.DataFrame({'words': words})

    # Count occurrences of each word, most frequent first
    s = (df.groupby(df['words'])['words']
           .agg([('size', np.size)])
           .sort_values(by='size', ascending=False))
    # Drop stop words, then convert to {'size': {word: count}}
    s = s[~s.index.isin(stopwords['stopword'])].to_dict()

    wordcloud = WordCloud(font_path=r'E:\Python\machine learning\simhei.ttf',
                          background_color='black')
    wordcloud.fit_words(s['size'])
    plt.imshow(wordcloud)

    pngfile = os.path.join(root, name.split('.')[0] + '.png')
    wordcloud.to_file(pngfile)


jieba.load_userdict(r'E:\Python\machine learning\NLPstopwords.txt')
stopwords = pd.read_csv(r'E:\Python\machine learning\StopwordsCN.txt',
                        encoding='utf-8', index_col=False)

# Walk the job-postings folder and build one word cloud per .txt file
for root, dirs, files in os.walk(r'E:\職位信息'):
    for name in files:
        if name.split('.')[-1] == 'txt':
            print(name)
            cloud(root, name, stopwords)
```

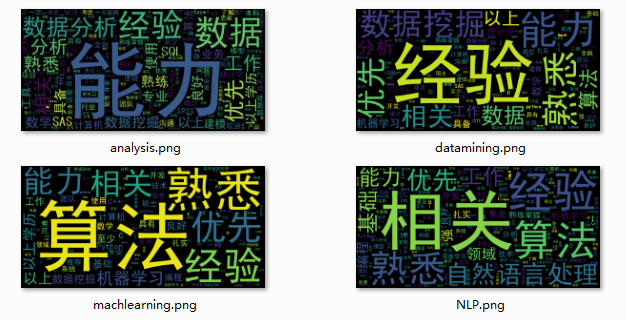

The resulting word cloud is shown above.

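Some noise words clearly were not removed, such as "相關" (related) and "以上學歷" (degree or above), which carry no useful meaning. I had planned to identify stop words by document frequency (DF), but I did not think about this while scraping, and the number of records is small anyway, so I did not go looking for stop words specific to job postings. Even so, the cloud shows that algorithms and experience matter a lot. Keep at it!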
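The DF idea mentioned above was not implemented in the post, so what follows is only a minimal sketch of how it might look. It assumes each posting is available as its own list of tokens (for example, the output of `jieba.cut` per posting). The helper name `high_df_terms`, the `min_ratio` threshold, and the toy data are hypothetical and chosen purely for illustration.

```python
from collections import Counter


def high_df_terms(docs, min_ratio=0.8):
    """Return terms that occur in at least `min_ratio` of the documents.

    `docs` is a list of token lists, one per posting.
    `min_ratio` is an illustrative threshold, not a recommended value.
    """
    df = Counter()
    for tokens in docs:
        df.update(set(tokens))  # count each term once per document
    cutoff = min_ratio * len(docs)
    return {term for term, n in df.items() if n >= cutoff}


# Toy, made-up postings that are already tokenized
docs = [
    ["相關", "經驗", "算法"],
    ["相關", "學歷", "Python"],
    ["相關", "經驗", "Hadoop"],
    ["相關", "算法", "Spark"],
]
candidates = high_df_terms(docs, min_ratio=0.75)
print(candidates)
```

Terms returned this way are only candidates: a word that appears in nearly every posting may still be informative, so the list would need a manual pass before being appended to the stop word file.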
