Storm Study Notes (Part 3: Storm Cluster Setup)

阿新 • Published: 2019-01-13

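I. Single-Node Setup

1. Upload the Storm package and extract it

2. In the storm directory, create a logs directory to hold the output the daemons produce at runtime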

mkdir logs

3. From the bin directory, run the following command to start ZooKeeper

./storm dev-zookeeper >> ../logs/dev-zookeeper.out 2>&1 &

4. Start Nimbus

./storm nimbus >> ../logs/nimbus.out 2>&1 &

5. Start the Supervisor

./storm supervisor >> ../logs/supervisor.out 2>&1 &

6. Start the UI

./storm ui >> ../logs/ui.out 2>&1 &

7. Open the UI in a browser

http://192.168.30.141:8080/index.html
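Steps 3 to 6 can be collected into one small script. The following is only a sketch: the `STORM_HOME` default, the `START_DELAY` pause, and the function names are assumptions for illustration and do not come from the original setup. The commands it runs are the same `storm dev-zookeeper`, `storm nimbus`, `storm supervisor`, and `storm ui` invocations used above.

```shell
#!/bin/sh
# Assumed install location; adjust to wherever the Storm package was extracted.
STORM_HOME="${STORM_HOME:-/usr/local/storm-0.10.0}"
LOG_DIR="$STORM_HOME/logs"

# Print the log file path used for a given daemon name.
log_file() {
    printf '%s/%s.out\n' "$LOG_DIR" "$1"
}

# Start one Storm daemon in the background, appending stdout and stderr to its log.
start_daemon() {
    mkdir -p "$LOG_DIR"
    "$STORM_HOME/bin/storm" "$1" >> "$(log_file "$1")" 2>&1 &
}

# Same order as the manual steps: ZooKeeper, Nimbus, Supervisor, UI.
# The pause between daemons is an assumption, not part of the original steps.
start_single_node() {
    for daemon in dev-zookeeper nimbus supervisor ui; do
        start_daemon "$daemon"
        sleep "${START_DELAY:-5}"
    done
}
```

Calling `start_single_node` after sourcing the script starts all four daemons. If the UI page does not load, the files under `logs` are the first place to look.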

8. Modify the main class that submits the topology

    Config conf = new Config();
    if (args.length > 0) {
        // A topology name was passed on the command line: submit to the cluster.
        try {
            StormSubmitter.submitTopology(args[0], conf, tb.createTopology());
        } catch (AlreadyAliveException e) {
            e.printStackTrace();
        } catch (InvalidTopologyException e) {
            e.printStackTrace();
        }
    } else {
        // No arguments: run the topology in an in-process local cluster.
        LocalCluster lc = new LocalCluster();
        lc.submitTopology("wordcount", conf, tb.createTopology());
    }

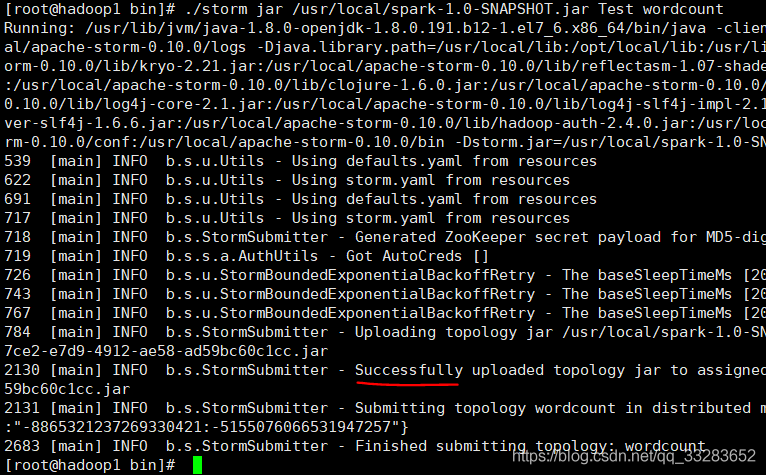

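9. Package the project and run the jar

Arguments: path to the jar, fully qualified name of the main class, and a topology name (any name will do)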

./storm jar /usr/local/spark-1.0-SNAPSHOT.jar Test wordcount
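Everything after the main class name is handed to the program's `main` method, so in the command above `wordcount` arrives as `args[0]` and the `StormSubmitter` branch from step 8 is taken. With no trailing argument, the same class would run in `LocalCluster` instead. A minimal wrapper that makes the argument order explicit might look like this; `submit_topology` and `STORM_BIN` are hypothetical names introduced only for this sketch.

```shell
#!/bin/sh
# submit_topology JAR MAIN_CLASS [TOPOLOGY_NAME]
# Hypothetical helper: checks that the jar exists, then calls "storm jar".
submit_topology() {
    jar="$1"
    main_class="$2"
    name="$3"
    if [ ! -f "$jar" ]; then
        echo "jar not found: $jar" >&2
        return 1
    fi
    # The topology name is passed through only when it is non-empty;
    # it becomes args[0] in the main class.
    "${STORM_BIN:-./storm}" jar "$jar" "$main_class" ${name:+"$name"}
}
```

For example, `submit_topology /usr/local/spark-1.0-SNAPSHOT.jar Test wordcount` run from the bin directory is equivalent to the command above.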

II. Cluster Setup

| Host | Nimbus | Supervisor | ZooKeeper |
| --- | --- | --- | --- |
| hadoop1 | 1 | | 1 |
| hadoop2 | | 1 | 1 |
| hadoop3 | | 1 | 1 |

1. Upload the Storm package and extract it

2. Create a logs folder in the storm directory

3. Edit storm.yaml under conf

    storm.zookeeper.servers:
      - "hadoop1"
      - "hadoop2"
      - "hadoop3"
    nimbus.host: "hadoop1"
    storm.local.dir: "/tmp/storm"
    supervisor.slots.ports:
      - 6700
      - 6701
      - 6702
      - 6703
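Each entry under `supervisor.slots.ports` is one worker slot on a supervisor node, so four ports on each of the two supervisors (hadoop2 and hadoop3) allow up to eight workers in total. Because the same file must exist on every node, it can help to generate it rather than edit it by hand. The function below is a sketch that prints the configuration shown above; the name `write_storm_yaml` is an assumption made for illustration, while the keys and values are the ones used in this setup.

```shell
#!/bin/sh
# write_storm_yaml NIMBUS_HOST ZK_HOST...
# Prints a storm.yaml with the given Nimbus host and ZooKeeper servers.
write_storm_yaml() {
    nimbus="$1"
    shift
    echo "storm.zookeeper.servers:"
    for zk in "$@"; do
        printf '  - "%s"\n' "$zk"
    done
    printf 'nimbus.host: "%s"\n' "$nimbus"
    echo 'storm.local.dir: "/tmp/storm"'
    echo "supervisor.slots.ports:"
    # One worker slot per port on each supervisor.
    for port in 6700 6701 6702 6703; do
        printf '  - %s\n' "$port"
    done
}
```

A possible use is `write_storm_yaml hadoop1 hadoop1 hadoop2 hadoop3 > conf/storm.yaml`, run from the storm directory before distributing it.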

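4. Distribute it to the other machines (the command below copies to hadoop3; repeat it for hadoop2)

scp -r storm-0.10.0/ hadoop3:/usr/local/

5. Start ZooKeeper on the nodes listed in storm.zookeeper.servers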

6. On hadoop1, start Nimbus and the UI

./bin/storm nimbus >> ./logs/nimbus.out 2>&1 &

./bin/storm ui >> ./logs/ui.out 2>&1 &

7. On hadoop2 and hadoop3, start the Supervisor

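./bin/storm supervisor >> ./logs/supervisor.out 2>&1 &

8. Submit a topology: it can be submitted from a worker node, but it always ends up on the master node (Nimbus), which also stores the topology information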

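./storm jar /usr/local/spark-1.0-SNAPSHOT.jar Test wordcount

9. Rebalance command

Adjust the number of workers and executors

// Set the number of workers of the topology named wordcount to 4, and the number of executor threads of the component named wcountbolt to 4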

./bin/storm rebalance wordcount -n 4 -e wcountbolt=4
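In `storm rebalance`, `-n` sets the new number of workers and each `-e component=parallelism` pair sets the executor count for one component; `-e` can be repeated, and an optional `-w` gives the wait time in seconds before the change is applied. The number of executors of a component cannot exceed its number of tasks, which is fixed when the topology is built. The helper below is a sketch that only assembles the command line for review; `rebalance_cmd` is a hypothetical name, not part of Storm.

```shell
#!/bin/sh
# rebalance_cmd TOPOLOGY WORKERS [COMPONENT=PARALLELISM ...]
# Prints the storm rebalance command instead of running it.
rebalance_cmd() {
    topology="$1"
    workers="$2"
    shift 2
    cmd="./bin/storm rebalance $topology -n $workers"
    # One -e flag per component whose executor count should change.
    for pair in "$@"; do
        cmd="$cmd -e $pair"
    done
    printf '%s\n' "$cmd"
}

rebalance_cmd wordcount 4 wcountbolt=4
# prints: ./bin/storm rebalance wordcount -n 4 -e wcountbolt=4
```

Printing the command first makes it easy to check the component names against those shown in the UI before applying the change.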