Recurrent Neural Network Series (1): BasicRNNCell in TensorFlow

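Having read about how RNNs work, the natural next step is to implement one in some framework. But for a beginner, TensorFlow's RNN API always feels obscure, and compared with its CNN API it really is a notch harder to follow. The argument names, for example, are not very friendly. What does num_units actually refer to? Forgive me, at first I assumed it was the number of RNN cells. And why does zero_state() need batch_size as an argument?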

1. Conclusion

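First, recall the RNN computation formula, output = new_state = tanh(input · W + state · U + B), and a minimal cell definition: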

    output_size = 10
    batch_size = 32
    cell = tf.nn.rnn_cell.BasicRNNCell(num_units=output_size)
    input = tf.placeholder(dtype=tf.float32, shape=[batch_size, 150])
    h0 = cell.zero_state(batch_size=batch_size, dtype=tf.float32)
    output, h1 = cell.call(input, h0)

上面是一個RNN單元最簡單的定義形式,可是每個引數又到底是什麼含義呢?我們知道一個最基本的RNN單元中有三個可訓練的引數

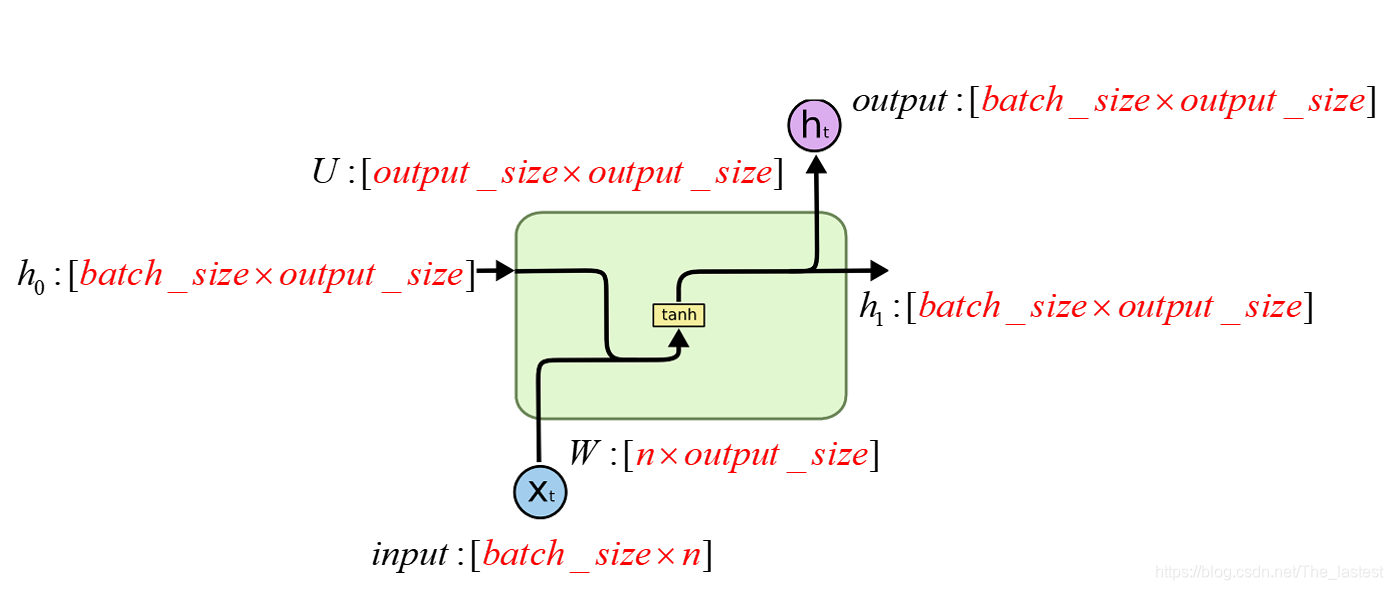

,以及兩個輸入變數。所以我們在構造RNN的時候就需要指定各個引數的維度了。可上面6行程式碼中,各個引數又是誰跟誰呢? 下圖就是直接結果。

Putting the figure above and the code together, we can see:

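First: num_units=output_size on line 3 of the code tells us that the output of the cell has output_size dimensions (for example, 10 scores for the 10 possible digits), and that the second dimension of the weight matrices W and U is output_size;

Second: shape=[batch_size,150] on line 4 of the code tells us the shapes of everything else: the input is [batch_size,150], so W must be [150,output_size], and the state h0 is [batch_size,output_size].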
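The shape bookkeeping above can be checked without TensorFlow. The following is a minimal NumPy sketch, assuming the formula output = tanh(input · W + h0 · U + B); the names W, U, B and the random values are illustrative stand-ins, not TensorFlow's actual variables or initial values.

```python
import numpy as np

batch_size, input_dim, num_units = 32, 150, 10

rng = np.random.default_rng(0)
x = rng.standard_normal((batch_size, input_dim))   # input: [32, 150]
h0 = np.zeros((batch_size, num_units))             # initial state: [32, 10]

# Trainable parameters (random stand-ins, not TensorFlow's initial values).
W = rng.standard_normal((input_dim, num_units))    # [150, 10]
U = rng.standard_normal((num_units, num_units))    # [10, 10]
B = np.zeros(num_units)                            # [10]

# One RNN step: every term has shape [32, 10], so the sum does too.
h1 = np.tanh(x @ W + h0 @ U + B)
print(h1.shape)  # (32, 10)
```

Changing num_units changes the second dimension of W, U and h1 together, while changing the input width 150 only affects the first dimension of W.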

2. Where this comes from

    class BasicRNNCell(RNNCell):
        """The most basic RNN cell.

        Args:
          num_units: int, The number of units in the RNN cell.
          activation: Nonlinearity to use. Default: `tanh`.
          reuse: (optional) Python boolean describing whether to reuse variables
            in an existing scope. If not `True`, and the existing scope already has
            the given variables, an error is raised.
        """

        def __init__(self, num_units, activation=None, reuse=None):
            super(BasicRNNCell, self).__init__(_reuse=reuse)
            self._num_units = num_units
            self._activation = activation or math_ops.tanh

        @property
        def state_size(self):
            return self._num_units

        @property
        def output_size(self):
            return self._num_units

        def call(self, inputs, state):
            """Most basic RNN: output = new_state = act(W * input + U * state + B)."""
            output = self._activation(_linear([inputs, state], self._num_units, True))
            return output, output


    def _linear(args,
                output_size,
                bias,
                bias_initializer=None,
                kernel_initializer=None):
        # ------- many lines omitted here -------
        with vs.variable_scope(outer_scope) as inner_scope:
            inner_scope.set_partitioner(None)
            if bias_initializer is None:
                bias_initializer = init_ops.constant_initializer(0.0, dtype=dtype)
            biases = vs.get_variable(
                _BIAS_VARIABLE_NAME, [output_size],
                dtype=dtype,
                initializer=bias_initializer)
        return nn_ops.bias_add(res, biases)

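In the call method of class BasicRNNCell, the statement output = self._activation(_linear([inputs, state], self._num_units, True)) passes self._num_units as the output_size argument of _linear, so num_units and output_size are the same thing. In _linear, the call vs.get_variable(_BIAS_VARIABLE_NAME, [output_size], ...) shows that output_size is the dimension of the bias B. Once these two points are clear, everything else falls into place.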
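To make the roles of num_units, state_size, output_size and zero_state() concrete, here is a simplified NumPy stand-in for the cell. It is only a sketch: the class name TinyRNNCell is hypothetical, the input width is passed in explicitly as an assumption to keep the code short, and the uniform initialization is arbitrary rather than TensorFlow's.

```python
import numpy as np


class TinyRNNCell:
    """Hypothetical NumPy stand-in for BasicRNNCell; not TensorFlow code."""

    def __init__(self, num_units, input_dim, seed=0):
        rng = np.random.default_rng(seed)
        self.num_units = num_units
        # One kernel holds W (first input_dim rows) stacked on U (last num_units rows).
        self.kernel = rng.uniform(-0.5, 0.5, size=(input_dim + num_units, num_units))
        self.bias = np.zeros(num_units)  # one bias value per unit

    @property
    def state_size(self):
        return self.num_units

    @property
    def output_size(self):
        return self.num_units

    def zero_state(self, batch_size):
        # The state has one row per example, so batch_size must be known here.
        return np.zeros((batch_size, self.num_units))

    def call(self, inputs, state):
        concat = np.concatenate([inputs, state], axis=1)
        output = np.tanh(concat @ self.kernel + self.bias)
        return output, output  # the output and the new state are the same array


cell = TinyRNNCell(num_units=10, input_dim=150)
h0 = cell.zero_state(batch_size=32)
output, h1 = cell.call(np.ones((32, 150)), h0)
print(cell.output_size, cell.state_size, output.shape)  # 10 10 (32, 10)
```

The sketch also suggests an answer to the question about zero_state(): the initial state is a [batch_size, num_units] array, and num_units alone does not determine its first dimension.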

2. Verification

2.1 Verifying the dimensions

    import tensorflow as tf  # TensorFlow 1.x API

    output_size = 10
    batch_size = 32

    cell = tf.nn.rnn_cell.BasicRNNCell(num_units=output_size)
    print(cell.output_size)

    input = tf.placeholder(dtype=tf.float32, shape=[batch_size, 150])
    h0 = cell.zero_state(batch_size=batch_size, dtype=tf.float32)
    output, h1 = cell.call(input, h0)  # one step; output and h1 are the same tensor

    print(output)
    print(h1)

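Following the reasoning above, the dimensions are: input: [32,150]; W: [150,10]; h0: [32,10]; U: [10,10]; B: [10]. The final output should therefore have shape [32,10], which is what the program prints: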

    10
    Tensor("Tanh:0", shape=(32, 10), dtype=float32)
    Tensor("Tanh:0", shape=(32, 10), dtype=float32)
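The same shapes also fix how many trainable values the cell holds. As a small worked example (the helper name rnn_param_count is hypothetical, not a TensorFlow function), counting the entries of W, U and B gives:

```python
def rnn_param_count(input_dim, num_units):
    """Number of trainable values in a basic RNN cell: W, U and the bias B."""
    w = input_dim * num_units   # W: [input_dim, num_units]
    u = num_units * num_units   # U: [num_units, num_units]
    b = num_units               # B: [num_units]
    return w + u + b


print(rnn_param_count(150, 10))  # 1610
print(rnn_param_count(5, 4))     # 40
```

Note that batch_size does not appear: it affects the shapes of the input and the state, not the number of parameters.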

2.2 Verifying the computed values

    import tensorflow as tf  # TensorFlow 1.x API
    import numpy as np
    from tensorflow.python.ops import variable_scope as vs

    output_size = 4
    batch_size = 3
    dim = 5

    cell = tf.nn.rnn_cell.BasicRNNCell(num_units=output_size)
    input = tf.placeholder(dtype=tf.float32, shape=[batch_size, dim])
    h0 = cell.zero_state(batch_size=batch_size, dtype=tf.float32)
    output, h1 = cell.call(input, h0)

    x = np.array([[1, 2, 1, 1, 1], [2, 0, 0, 1, 1], [2, 1, 0, 1, 0]])

    # Fetch the kernel created by cell.call() by reusing it in the current scope.
    scope = vs.get_variable_scope()
    with vs.variable_scope(scope, reuse=True) as outer_scope:
        weights = vs.get_variable(
            "kernel", [9, output_size],  # 9 = dim + output_size
            dtype=tf.float32,
            initializer=None)

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        a, b, w = sess.run([output, h1, weights], feed_dict={input: x})
        print('output:')
        print(a)
        print('h1:')
        print(b)
        print("weights:")
        print(w)  # shape = (9, 4)

    # Recompute the same step with NumPy (the bias is zero, so it is left out).
    state = np.zeros(shape=(3, 4))  # shape = (3, 4)
    all_input = np.concatenate((x, state), axis=1)  # shape = (3, 9)
    result = np.tanh(np.matmul(all_input, w))
    print('result:')
    print(result)

From the code above: input: [3,5]; W: [5,4]; h0: [3,4]; U: [4,4]; B: [4]. The final output should therefore have shape [3,4].

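Notes:

1. In its implementation, TensorFlow stacks W and U into a single matrix and concatenates input and h0 into a single matrix, which is why the weights have shape (9,4), i.e. (5+4, 4);

2. The bias here is 0, so it is left out of the NumPy computation.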
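The first note can be illustrated in isolation. The sketch below uses random NumPy arrays (not the TensorFlow variables) and assumes the kernel stores W in its first 5 rows and U in its last 4, matching the order in which input and state are concatenated. It uses a non-zero state, because with an all-zero h0 the U rows contribute nothing to the result.

```python
import numpy as np

rng = np.random.default_rng(42)
batch_size, dim, num_units = 3, 5, 4

x = rng.standard_normal((batch_size, dim))
h = rng.standard_normal((batch_size, num_units))            # non-zero state, so U matters
kernel = rng.standard_normal((dim + num_units, num_units))  # shape (9, 4)

# Split the merged kernel back into the two matrices of the formula.
W, U = kernel[:dim], kernel[dim:]

separate = np.tanh(x @ W + h @ U)
merged = np.tanh(np.concatenate([x, h], axis=1) @ kernel)

print(np.allclose(separate, merged))  # True
```

Merging turns two matrix products and an addition into one matrix product, and the values are the same up to floating-point rounding.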

Results (shown here with the weights first):

    weights:
    [[ 0.590749    0.31745368 -0.27975678  0.33500886]
     [-0.02256793 -0.34533614 -0.09014118 -0.5189797 ]
     [-0.24466929  0.17519772  0.20553339 -0.25595042]
     [-0.48579523  0.67465043  0.62406075 -0.32061592]
     [-0.0713594   0.3825792   0.6132684   0.00536895]
     [ 0.43795645  0.55633724  0.31295568 -0.37173718]
     [ 0.6170727   0.14996111 -0.321027   -0.624057  ]
     [ 0.42747557  0.4424585  -0.59979784  0.23592204]
     [-0.0294565   0.3372593  -0.14695019  0.07108325]]
    output:
    [[-0.2507479   0.69584984  0.7542856  -0.8549179 ]
     [ 0.5541449   0.9344188   0.5900975   0.3405997 ]
     [ 0.5870382   0.74615407 -0.0255884  -0.16797088]]
    h1:
    [[-0.2507479   0.69584984  0.7542856  -0.8549179 ]
     [ 0.5541449   0.9344188   0.5900975   0.3405997 ]
     [ 0.5870382   0.74615407 -0.0255884  -0.16797088]]
    result:
    [[-0.25074791  0.69584978  0.75428552 -0.85491791]
     [ 0.55414493  0.93441886  0.59009744  0.34059968]
     [ 0.58703823  0.74615404 -0.02558841 -0.16797091]]

result is the output computed with NumPy, and it matches the TensorFlow output and h1 up to floating-point precision.