機器學習實戰 第九章回歸樹錯誤

最近一直在學習《機器學習實戰》這本書。感覺寫的挺好,並且在網上能夠輕易的找到python原始碼。對學習機器學習很有幫助。

最近學到第九章樹迴歸。發現程式碼中一再出現問題。在網上查了下,一般的網上流行的錯誤有兩處。但是我發現原始碼中的錯誤不止這兩處,還有個錯誤在prune裡面,另外模型樹的預測部分也寫的很挫,奇怪的是這本書之前的程式碼基本上都沒有犯過什麼錯誤,這一章的程式碼卻頻繁的出現各種問題,讓人匪夷所思。。

首先是說明書中已經證實的兩個錯誤,都是簡單的語法錯誤

第一個錯誤在程式碼的binSplitDataSet函式中

def binSplitDataSet(dataSet, feature, value) 這裡dataSet[nonzero(dataSet[:,feature] > value)[0],:]確實是把矩陣dataset切分為了兩個矩陣,可是畫蛇添足之處在於後面加了[0],這就代表兩個矩陣都返回了矩陣的第一行。自然是錯的。。改法很簡單,刪掉[0]即可,如下:

def binSplitDataSet 緊接著第二個錯誤在chooseBestSplit裡(58行)

for splitVal in set(dataSet[:,featIndex]):這裡for splitVal in set(dataSet[:,featIndex]):,set傳入引數是一個矩陣,這裡肯定會報語法錯誤,應該改成

for splitValue in set(dataMat[:,feat].T.tolist()[0]):接下來的錯誤是我自己覺得的。網上並沒有看到別的出處:

程式碼的getmean函式

def getMean(tree):

if isTree(tree['right']): tree['right'] = getMean(tree['right'])

if isTree(tree['left']): tree['left'] = getMean(tree['left'])

return (tree['left']+tree['right'])/2.0仔細觀察這個函式。其實並沒有對這個樹求得平均數

比如當tree={‘left’:3,’right’:{‘left’:1,’right’:2}}的時候

呼叫getMean將返回2.25,顯然不等於(1+2+3)/3=2

所以這個程式碼要改就複雜了,我的方法是連建樹的部分一起改了。讓每個節點(葉子節點除外)都包含了一個值代表這個節點下面的葉節點數量,並且還在這個節點上面記錄所有葉節點的和。這樣計算getMean的時候效率也會更高(複雜度變成O(1))

接下來看treeForeCast函式

程式碼124行

if inData[tree['spInd']] > tree['spVal']:這裡又是一個坑爹之處。明顯spInd代表的是列,這裡填入矩陣就變成行了。而且一行矩陣怎麼可能和一個數字比較大小。所以這裡必然應該要改成:

if float(inMat[:,tree['spInd']])>tree['spVal']:然後繼續看modelTreeEval函式。

這個函式也寫的不忍吐槽。。

def modelTreeEval(model, inDat):

n = shape(inDat)[1]

X = mat(ones((1,n+1)))

X[:,1:n+1]=inDat

return float(X*model)這裡model只是一個2行1列的矩陣,你照著書上的寫法,最後return的部分肯定是報錯的,兩個矩陣根本不能相乘。。

改:

def modelTreeEval(model,inMat):

n=inMat.shape[1]

X=mat(ones((1,n)))

X[:,1:n]=inMat[:,:-1]

return float(X*model)暫時就發現這麼多錯誤,後面的畫圖的部分我就沒看了。

我發一下改完後的全部程式碼(run部分的程式碼為自己寫的測試函式,只測了模型樹的預測)

# -*- coding:utf-8 -*-

import math

from numpy import *

import matplotlib.pyplot as plt

def loadDataSet(fileName):

fr=open(fileName)

dataSet=[]

for line in fr.readlines():

items=line.strip().split('\t')

dataSet.append(map(float,items))

return dataSet

def regLeaf(dataMat):

return mean(dataMat[:,-1])

def regErr(dataMat):

return var(dataMat[:,-1])*dataMat.shape[0]

def modelLeaf(dataMat):

ws,X,Y=linearSolve(dataMat)

return ws

def modelErr(dataMat):

ws,X,Y=linearSolve(dataMat)

YHat=X*ws

return sum(power(YHat-Y,2))

def binSplitDataSet(dataMat,feature,value):

mat0=dataMat[nonzero(dataMat[:,feature]>value)[0]]

mat1=dataMat[nonzero(dataMat[:,feature]<=value)[0]]

return mat0,mat1

def chooseBestFeature(dataMat,leafType,errType,ops):

tolS=ops[0];tolN=ops[1]

if len(set(dataMat[:,-1].T.tolist()[0]))==1:

return None,leafType(dataMat)

m,n=shape(dataMat);S=errType(dataMat)

bestS=inf;bestVal=0;bestFeature=0

for feat in range(n-1):

for splitValue in set(dataMat[:,feat].T.tolist()[0]):

mat0,mat1=binSplitDataSet(dataMat,feat,splitValue)

if (mat0.shape[0]<tolN) or (mat1.shape[0]<tolN):

continue

nowErr=errType(mat0)+errType(mat1)

if nowErr<bestS:

bestS=nowErr

bestFeature=feat

bestVal=splitValue

if abs(S-bestS)<tolS:

return None,leafType(dataMat)

mat0,mat1=binSplitDataSet(dataMat,bestFeature,bestVal)

if (mat0.shape[0]<tolN) or (mat1.shape[0]<tolN):

return None,leafType(dataMat)

return bestFeature,bestVal

def isTree(obj):

return (type(obj).__name__=='dict')

def createTree(dataMat,leafType=modelLeaf,errType=modelErr,ops=(1,4)):

feat,val=chooseBestFeature(dataMat,leafType,errType,ops)

if feat==None:

return val

retTree={}

retTree['spInd']=feat

retTree['spVal']=val

leftMat,rightMat=binSplitDataSet(dataMat,feat,val)

retTree['lTree']=createTree(leftMat,leafType,errType,ops)

retTree['rTree']=createTree(rightMat,leafType,errType,ops)

# 建樹的時候計算出每個節點下面的葉子節點數量,並且計算出該節點下面的葉子節點的和

# 方便後剪枝的時候能夠快速的對樹進行塌陷處理

# 此處改動已經和原書中的寫法有了很大不同

if isTree(retTree['lTree']) and isTree(retTree['rTree']):

retTree['leafN']=retTree['lTree']['leafN']+retTree['rTree']['leafN']

retTree['total']=retTree['lTree']['total']+retTree['rTree']['total']

elif (not isTree(retTree['lTree'])) and isTree(retTree['rTree']):

retTree['leafN']=1+retTree['rTree']['leafN']

retTree['total']=retTree['lTree']+retTree['rTree']['total']

elif isTree(retTree['lTree']) and (not isTree(retTree['rTree'])):

retTree['leafN']=retTree['lTree']['leafN']+1

retTree['total']=retTree['lTree']['total']+retTree['rTree']

else:

retTree['leafN']=2

retTree['total']=retTree['lTree']+retTree['rTree']

return retTree

def getMean(tree):

if isTree(tree):

if isTree(tree['lTree']):

tree['lTree']=tree['lTree']['total']

if isTree(tree['rTree']):

tree['rTree']=tree['rTree']['total']

return tree['total']*1.0/tree['leafN']

else:

return tree

def prune(tree,testData):

if testData.shape[0]==0:

return getMean(tree)

if isTree(tree['lTree']) or isTree(tree['rTree']):

lSet,rSet=binSplitDataSet(testData,tree['spInd'],tree['spVal'])

if isTree(tree['lTree']):

tree['lTree']=prune(tree['lTree'],lSet)

if isTree(tree['rTree']):

tree['rTree']=prune(tree['rTree'],rSet)

if not isTree(tree['lTree']) and not isTree(tree['rTree']):

lSet,rSet=binSplitDataSet(testData,tree['spInd'],tree['spVal'])

errNoMerge=sum(power(lSet[:,-1]-tree['lTree'],2))+sum(power(rSet[:,-1]-tree['rTree'],2))

treeMean=tree['total']/tree['leafN']

errMerge=sum(power(testData[:,-1]-treeMean,2))

if errNoMerge<errMerge:

print "merging"

return treeMean

else:

return tree

else:

return tree

def linearSolve(dataMat):

m,n=shape(dataMat)

X=mat(ones((m,n)));Y=mat(zeros((m,1)))

X[:,1:n]=dataMat[:,0:n-1];Y=dataMat[:,-1]

xTx=X.T*X

if linalg.det(xTx)==0.0:

raise NameError("singular matrix")

ws=xTx.I*X.T*Y

return ws,X,Y

# 迴歸樹預測

def regTreeEval(model,inMat):

return float(model)

# 模型樹預測

def modelTreeEval(model,inMat):

n=inMat.shape[1]

X=mat(ones((1,n)))

X[:,1:n]=inMat[:,:-1]

return float(X*model)

def treeForeCast(tree,inMat,modelEval=modelTreeEval):

if not isTree(tree):

return modelEval(tree,inMat)

if float(inMat[:,tree['spInd']])>tree['spVal']:

if not isTree(tree['lTree']):

return modelEval(tree['lTree'],inMat)

else:

return treeForeCast(tree['lTree'],inMat,modelEval)

else:

if not isTree(tree['rTree']):

return modelEval(tree['rTree'],inMat)

else:

return treeForeCast(tree['rTree'],inMat,modelEval)

def createForeCast(tree,testMat,modelEval=modelTreeEval):

m=testMat.shape[0]

yHat=mat(zeros((m,1)))

for i in range(m):

yHat[i]=treeForeCast(tree,testMat[i],modelEval)

return yHat

def run():

dataSet=loadDataSet('bikeSpeedVsIq_train.txt')

testSet=loadDataSet('bikeSpeedVsIq_test.txt')

tree=createTree(mat(dataSet),ops=(1,20))

yHat=createForeCast(tree,mat(testSet))

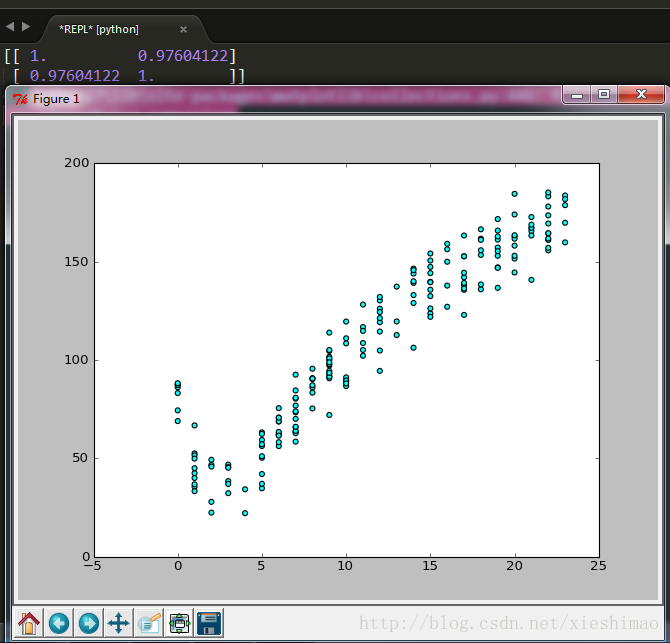

print corrcoef(yHat.T,mat(testSet)[:,1].T)

fig=plt.figure()

ax=fig.add_subplot(111)

ax.scatter(array(dataSet)[:,0],array(dataSet)[:,1],c='cyan',marker='o')

plt.show()

run()最後執行的結果

然後最屌的就是,雖然書中的程式碼錯誤一大堆,居然最後的答案還跟我是一樣的。這才是最騷的。。。

收工!