TensorFlow Deep Learning Notes (2): Visualizing BPNN Handwritten Digit Recognition

阿新 • Published: 2018-12-09

Dataset: MNIST. Activation function: ReLU. Loss function: cross-entropy. Optimizer: AdamOptimizer. Visualization tool: TensorBoard.
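To make the activation and loss choices concrete, here is a minimal pure-Python sketch of ReLU and cross-entropy on a single toy example. It is not the code from this post: the helper names are hypothetical, and it assumes a softmax output layer, which the setup line above does not state.

```python
import math

def relu(values):
    """Element-wise ReLU: negative inputs become 0."""
    return [max(0.0, v) for v in values]

def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for stability)."""
    shift = max(logits)
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(one_hot_label, probabilities):
    """Cross-entropy between a one-hot label and predicted probabilities."""
    return -sum(t * math.log(p)
                for t, p in zip(one_hot_label, probabilities) if t > 0)

# Toy 3-class example (assumed values, not MNIST data)
hidden = relu([-1.5, 0.0, 2.0])          # [0.0, 0.0, 2.0]
probs = softmax(hidden)                  # probabilities that sum to 1
loss = cross_entropy([0, 0, 1], probs)   # small, since class 2 scores highest
```

With a one-hot label, the loss reduces to the negative log of the probability assigned to the correct class, so it shrinks toward 0 as the network grows more confident in the right digit.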

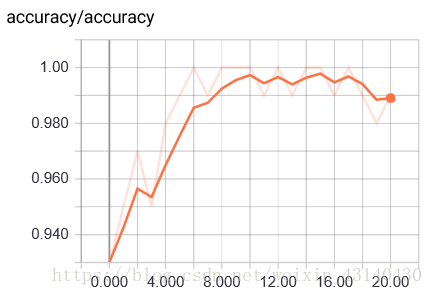

Training ran for 21 epochs. Accuracy for the final five (Iter 16 to 20):

Iter 16,Testing Accuracy: 0.9824 ,Training Accuracy: 0.9949273

Iter 17,Testing Accuracy: 0.9822 ,Training Accuracy: 0.99496365

Iter 18,Testing Accuracy: 0.9832 ,Training Accuracy: 0.9950182

Iter 19,Testing Accuracy: 0.983 ,Training Accuracy: 0.99503636

Iter 20,Testing Accuracy: 0.983 ,Training Accuracy: 0.9950727
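The log lines above can be parsed to see how far training accuracy runs ahead of testing accuracy (about 1.2 percentage points by the last epoch). The following is a rough sketch using only the numbers quoted above; the regular expression and variable names are my own assumptions about the log format, not part of the original script.

```python
import re

# Log lines copied from the results quoted above
log_lines = [
    "Iter 16,Testing Accuracy: 0.9824 ,Training Accuracy: 0.9949273",
    "Iter 17,Testing Accuracy: 0.9822 ,Training Accuracy: 0.99496365",
    "Iter 18,Testing Accuracy: 0.9832 ,Training Accuracy: 0.9950182",
    "Iter 19,Testing Accuracy: 0.983 ,Training Accuracy: 0.99503636",
    "Iter 20,Testing Accuracy: 0.983 ,Training Accuracy: 0.9950727",
]

pattern = re.compile(
    r"Iter (\d+),Testing Accuracy: ([\d.]+) ,Training Accuracy: ([\d.]+)"
)

# One (epoch, test_accuracy, train_accuracy) tuple per log line
rows = [
    (int(epoch), float(test), float(train))
    for epoch, test, train in pattern.findall("\n".join(log_lines))
]

# Train-minus-test gap per epoch; a widening gap can hint at overfitting
gaps = {epoch: train - test for epoch, test, train in rows}

# Epoch with the highest testing accuracy among the quoted lines
best_epoch, best_test, _ = max(rows, key=lambda row: row[1])

for epoch, test, train in rows:
    print(f"Iter {epoch}: test={test:.4f} train={train:.4f} gap={gaps[epoch]:.4f}")
```

Among these five epochs, testing accuracy peaks at Iter 18 (0.9832) while training accuracy keeps creeping upward, which suggests the model has largely converged.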

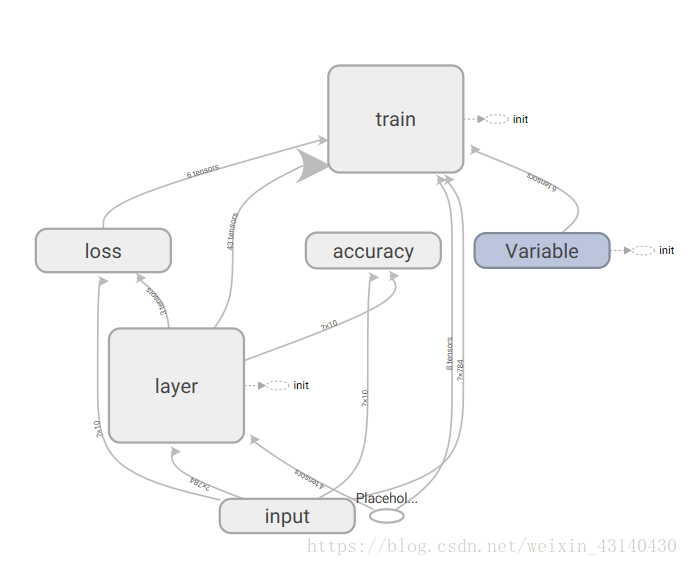

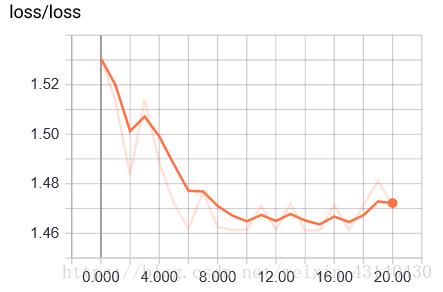

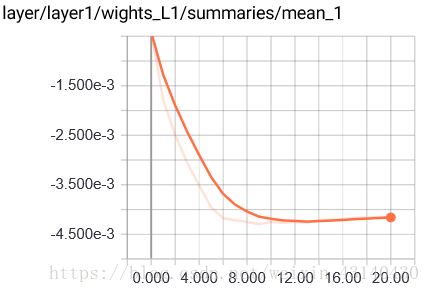

Visualization:

Graph:

Accuracy over training:

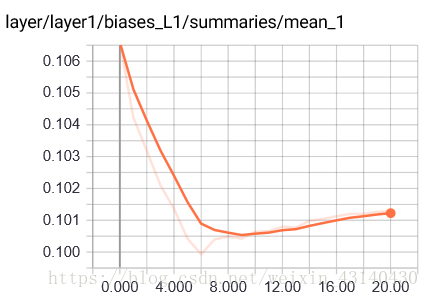

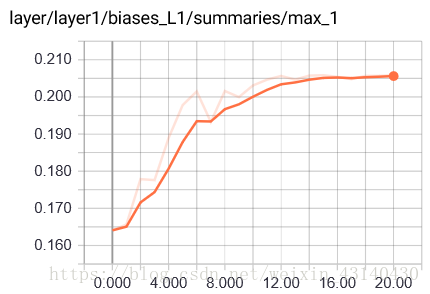

Selected first-layer parameters over training:

Change in the bias mean

The code is as follows:

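import tensorflow as tf

# Requires TensorFlow 1.x; this tutorial module was removed in TensorFlow 2.x

from tensorflow.examples.tutorials.mnist import input_data

# Load the MNIST dataset with one-hot encoded labels

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

# Number of examples per mini-batch

batch_size = 100

# Number of full mini-batches per epoch

n_batch = mnist.train.num_examples // batch_size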

def