GMM混合高斯背景建模C++結合Opencv實現(內附Matlab實現)

阿新 • • 發佈:2018-11-11

最近在做視訊流檢測方面的工作,一般情況下這種視訊流檢測是較複雜的場景,比如交通監控,或者各種監控攝像頭,場景比較複雜,因此需要構建背景影象,然後去檢測場景中每一幀動態變化的前景部分,GMM高斯模型是建模的一種方法,關於高斯建模的介紹有很多部落格了,大家可以去找一找,本篇部落格主要依賴於上一個老兄,他用matlab實現了GMM模型,我在其基礎上利用C++和OpenCV進行了重寫,下面會給出C++程式碼,希望能給大家一點幫助,本文能力有限,如有問題可以一起交流,一起改進。

先展示結果吧,不要問我的影象是神馬影象,哈哈哈 感覺很low b。 其實都是視訊中的某一幀影象。方法可以用就行啦!!

背景影象:

待檢測影象:

檢測結果:

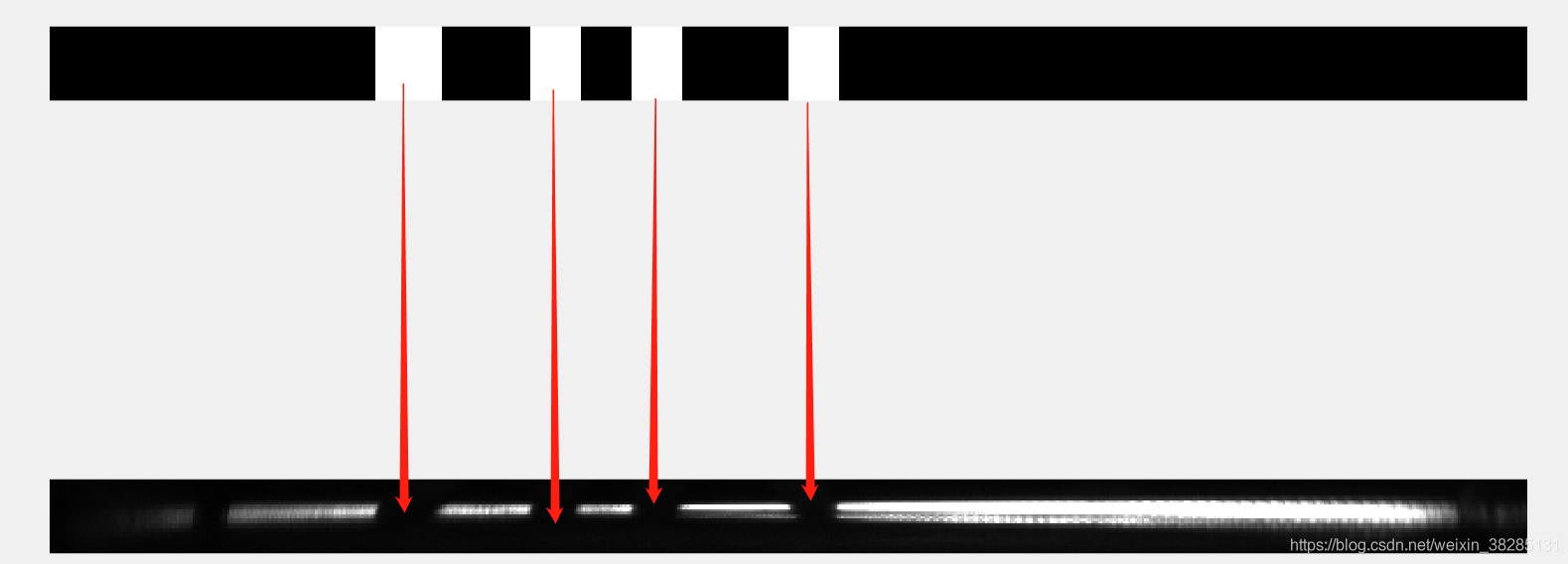

我其實是想檢測出圖片中的缺口,以下是檢測結果,我改變一下形式吧 ,這樣能更清楚的看出結果

其實還是很精準的嘛,來來來,下面上程式碼~

C++結合OpenCV程式碼

//Writen by 蘇丶 2018//11/04

//轉載請附轉載連結

#include<opencv2/opencv.hpp>

#include<opencv2/core.hpp>

#include<math.h>

#include<iostream>

#include<stdio.h>

#define K 3

#define Matlab程式碼:

我主要是針對以為老哥的Matlab程式碼改出來的C++版本,所以把老哥的部落格連結附在下面,然後把程式碼也展示一下吧

Matlab GMM實現

麻雞,再次吐槽這個配色,我不知道怎麼改啊 ,有沒有人會改 請給我留言,我看著這個配色好不爽

clear;

fr=imread('first.jpg');

% 讀取該影象作為背景

fr_bw1 = rgb2gray(fr);

% 將背景轉換為灰度影象

fr_size = size(fr);

%取幀大小

width = fr_size(2);

height = fr_size(1); %獲取原影象的尺寸

fg = zeros(height, width); %前景,讀取的第二張圖片獲得

bg_bw = zeros(height, width);%背景,讀取的第一張圖片獲得

fr_bw1 = double(fr_bw1);

% --------------------- mog variables -----------------------------------

C = 3; % 組成混合高斯的單高斯數目 (一般3-5)

D = 2.5; % 閾值(一般2.5個標準差)

alpha = 0.01; % learning rate 學習率決定更新速度(between 0 and 1) (from paper 0.01)

thresh = 0.25; % foreground threshold 前景閾值(0.25 or 0.75 in paper)

sd_init = 36; % initial standard deviation 初始化標準差(for new components) var = 36 in paper

w = zeros(height,width,C); % initialize weights array 初始化權值陣列

w(:,:,1) = 1;

w(:,:,2:C) = 2^-10; % 第一個高斯分佈的初始權重為1,其餘分佈的權重為0

mean = zeros(height,width,C); % pixel means 畫素均值

mean(:,:,1) = fr_bw1; % 第一個高斯分佈的初始均值為參考幀的值,其餘分佈的均值為0s

sd = sd_init*ones(height,width,C); % pixel standard deviations 畫素標準差

matchcnt = ones(height, width,C); % 匹配的次數,初始值都設為1

u_diff = zeros(height,width,C); % difference of each pixel from mean 圖片與高斯均值的差

fr = imread('second.jpg'); % read in frame 讀取幀

fr_bw = rgb2gray(fr); % convert frame to grayscale 轉換為灰度影象

fr_bw = double(fr_bw); % 將灰度圖值設定為雙精度

%求匯入進來的圖片與各個高斯均值的差

for m=1:C

u_diff(:,:,m) = abs(fr_bw - double(mean(:,:,m)));

end

% update gaussian components for each pixel 更新每個畫素的背景模型

%rank_ind = zeros(C,1);

for i=1:height

for j=1:width

match = 0; %畫素與高斯模型匹配的標識

match_ind = 0;%為該畫素最匹配的高斯模型的標號

for k=1:C %與第k個高斯模型進行比對,然後更新引數

if (abs(u_diff(i,j,k)) <= D*sd(i,j,k) && (match == 0)) % pixel matches component畫素匹配了高斯中的第k個模型

match = 1;

% variable to signal component match 設定匹配標記

match_ind = k;

% update weights, mean, sd, p 更新權值,均值,標準差和引數學習率

p = alpha/w(i,j,k); %理應使用p = alpha/gaussian才對,這裡勉強

w(i,j,k) = (1-alpha)*w(i,j,k) + alpha;

%p = alpha/w(i,j,k); %理應使用p = alpha/gaussian才對,這裡勉強

mean(i,j,k) = (1-p)*mean(i,j,k) + p*double(fr_bw(i,j));

sd(i,j,k) = sqrt((1-p)*(sd(i,j,k)^2) + p*((double(fr_bw(i,j)) - mean(i,j,k)))^2);

if matchcnt(i, j, k) ~= 255 % 匹配次數達到255就不加了。在實時視訊序列中,上限是必須的,否則可能溢位。

matchcnt(i, j, k) = matchcnt(i, j, k) + 1;

end

else

%當與第k個模型沒有匹配的話,則第k個模型所佔的比重自然而然地下降

w(i,j,k) = (1-alpha)*w(i,j,k); % weight slighly decreases 權值減小

end

end

% if no components match, create new component 如果沒有匹配的模型則建立新模型

if(match==0) % 沒有匹配的高斯,建立新的高斯取代:排序後排在最後面的那個

[min_w,min_w_index]=min(w(i,j,:));

matchcnt(i,j,min_w_index) = 1; % 匹配次數設為1,一個小值

w(i,j,min_w_index) = 1 / ( sum( matchcnt(i, j, :) ) - 1 );% 權值為其它高斯分佈匹配次數之和的倒數

mean(i,j,min_w_index)=double(fr_bw(i,j));

sd(i,j,min_w_index)=sd_init;

end

%無論匹配是否成功,都要將該畫素在不同模型上的權重標準歸一化

w_sum = sum(w(i, j, :));

w(i, j, :) = w(i, j, :) / w_sum;

%針對該畫素,計算多個模型的優先順序(依據權重)

rank = w(i,j,:)./sd(i,j,:);

[sorted_rank, rank_ind] = sort(rank, 'descend');

%將前景的初始值設定為255,即為白色;

fg(i,j) = fr_bw(i,j);

%當該畫素匹配成功的時候,利用高斯混合模型,將該畫素值重新設定

if(match == 1)

switch match_ind

case rank_ind(1)% 與最優的高斯匹配,肯定是歸為背景點

fg(i,j) = 0;

case rank_ind(2)% 與中間的高斯匹配,如果最上面一個高斯的權值小於thresh,則這點歸為背景點

if w(i, j, rank_ind(1)) < thresh

fg(i, j) = 0;

end

case rank_ind(3)% 與最下面的高斯匹配,如果最下面的高斯權值大於1-thresh(或者前兩個高斯權值和小於thresh),則這點歸為背景點

if w(i, j, rank_ind(3)) > 1 - thresh

fg(i, j) = 0;

end

end

end

for k=1:C

bg_bw(i,j) = bg_bw(i,j)+ mean(i,j,k)*w(i,j,k);%更新背景

end

% % 根據rank的排序結果調整引數的順序

% tmp_T = [mean(i, j, :); sd(i, j, :); w(i, j, :); matchcnt(i, j, :)]; % 為了排序時,幾個引數同步調整,所以組合在一起

% mean(i, j, :) = tmp_T(1, rank_ind); %即同時利用rank,將mean進行了排序

% sd(i, j, :) = tmp_T(2, rank_ind); %同理

% w(i, j, :) = tmp_T(3, rank_ind); %同理

% matchcnt(i, j, :) = tmp_T(4, rank_ind);%同理

% if w(i, j, 1) > thresh %使用大於閾值的進行背景構造即可

% bg_bw(i, j) = w(i, j, 1) * mean(i, j, 1);

% else

% if w(i, j, 1) + w(i, j, 2) > thresh

% bg_bw(i, j) = w(i, j, 1) * mean(i, j, 1) + w(i, j, 2) * mean(i, j, 2);

% else

% bg_bw(i, j) = sum(w(i, j, :) .* mean(i, j, :));

% end

% end

end

end

figure(1),subplot(3,1,1),imshow(fr); %顯示輸入影象

subplot(3,1,2),imshow(uint8(bg_bw)); %顯示背景影象

subplot(3,1,3),imshow(uint8(fg)); %顯示前景影象

轉載請附本部落格連結地址:

https://blog.csdn.net/weixin_38285131/article/details/83721069